`job_description = browser.find_elements_by_class_name('jobs-search_right-rail')`

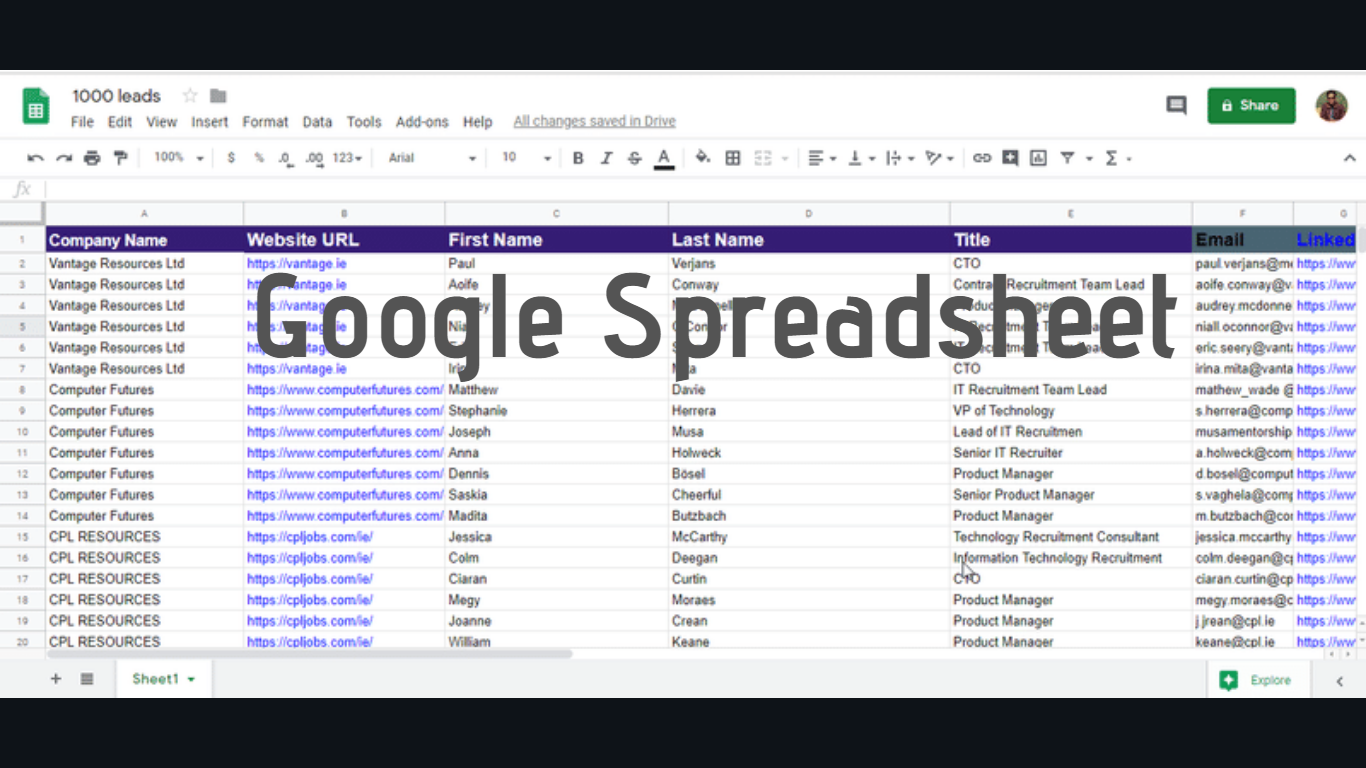

The web scraper is built in Python and packaged in a Docker container. It searches the StackOverflow website for specific jobs and sends notifications to Slack. The user associated with the Slack account and channel gets an email notification with the job links and a short job description.

    # This will open a new Chrome page to test specified url on (for scraping)
    browser = webdriver.Chrome("My Chrome Path")

    # Requires user to enter username and password
    username = browser.find_element_by_id("session_key")
    password = browser.find_element_by_id("session_password")

    # Once username and password are entered, this will automatically click the submit button to login into LinkedIn
    login_button = browser.find_element_by_class_name("sign-in-form_submit-button")

    # This is the URL to test the jobs I want to scrape from

    # This will scrape and display (25) job titles from page (1)
    job_title = browser.find_elements_by_class_name("job-card-list_title")

    # This will scrape and display (25) company names from page (1) - correspondent to company_title above
    job_company = browser.find_elements_by_class_name("job-card-container_company-name")

    # This will scrape and display (25) location names from page (1) - correspondent to company_title and company_name above
    job_location = browser.find_elements_by_class_name("job-card-container_metadata-item")

    # At this point, I am trying to iterate over each of the (25) jobs to pull out the description.
    # I've successfully been able to pull out (1) description, but haven't been able to pull out
    # the other descriptions of the remaining (24) jobs.
    job_description = browser.find_elements_by_class_name('jobs-search_right-rail')

The problem has to do with how the pages are loaded. Every time you click a new job container, it sends a different GET request to the server. This link, by default, has the first job selected. When you click another page, it changes the job id. So you can either emulate a click on the container, or change the currentJobId by scraping the id from the page and reloading the page with the new link.

Example of scraping the currentJobId for each item:

    job_containers = browser.find_elements_by_class_name('job-card-container relative job-card-list job-card-container-clickable job-card-list-underline-title-on-hover jobs-search-results-list_list-item-active jobs-search-two-pane_job-card-container-viewport-tracking-0')
    job_ids = []
    for job_container in job_containers:
        job_ids.append(job_container.get_attribute("data-job-id"))

Function to grab the descriptions:

    def get_descriptions(browser, job_ids):
        browser.get(f'&keywords=software%20developer')
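The second option from the answer, scraping each job id and reloading the search link with a new currentJobId, might look roughly like the sketch below. It is an illustration under assumptions, not the original author's code. `SEARCH_URL` is a placeholder, and `with_current_job_id` and `collect_job_ids` are hypothetical helper names. It also assumes each job card exposes a `data-job-id` attribute and that the description panel still carries the `jobs-search_right-rail` class. The `find_elements_by_*` helpers were removed in Selenium 4.3, so the sketch uses `find_elements(by, value)` instead. It selects cards by attribute because a space-separated list of classes is not a single class name.

```python
from urllib.parse import parse_qsl, quote, urlencode, urlsplit, urlunsplit

# Placeholder search URL (assumption): substitute the real job search link.
SEARCH_URL = "https://example.com/jobs/search/?keywords=software%20developer"

# Selenium's By.CSS_SELECTOR constant is the string "css selector"; the literal
# is used here so the sketch can be read without importing selenium.
CSS = "css selector"


def with_current_job_id(search_url, job_id):
    """Return search_url with its currentJobId query parameter set to job_id."""
    parts = urlsplit(search_url)
    # Drop any existing currentJobId so the parameter is never duplicated.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != "currentJobId"
    ]
    query.append(("currentJobId", str(job_id)))
    return urlunsplit(parts._replace(query=urlencode(query, quote_via=quote)))


def collect_job_ids(browser):
    """Read data-job-id from every job card on the current page, in order."""
    job_ids = []
    for card in browser.find_elements(CSS, "[data-job-id]"):
        job_id = card.get_attribute("data-job-id")
        if job_id and job_id not in job_ids:
            job_ids.append(job_id)
    return job_ids


def get_descriptions(browser, search_url, job_ids):
    """Reload the search page once per job id and read the right-hand panel."""
    descriptions = {}
    for job_id in job_ids:
        browser.get(with_current_job_id(search_url, job_id))
        panels = browser.find_elements(CSS, ".jobs-search_right-rail")
        # None marks a job whose panel was not found on the reloaded page.
        descriptions[job_id] = panels[0].text if panels else None
    return descriptions
```

With a logged-in `browser` already on the search page, usage would be along the lines of `get_descriptions(browser, SEARCH_URL, collect_job_ids(browser))`. On a live page the panel is filled in asynchronously, so an explicit wait before reading `panels[0].text` is probably needed.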
